Web Scraping Python Libraries

In this chapter, let us learn various Python modules that we can use for web scraping.

Python Development Environments using virtualenv

Virtualenv is a tool to create isolated Python environments. With the help of virtualenv, we can create a folder that contains all necessary executables to use the packages that our Python project requires. It also allows us to add and modify Python modules without access to the global installation.

Install virtualenv with pip by running the command pip install virtualenv.

Next, create a directory to represent the project, using mkdir followed by a directory name of your choice.

Then enter that directory with the cd command.

Now initialize the virtual environment in a folder of our choice. This tutorial names it websc, so the command is virtualenv websc.

Finally, activate the virtual environment: run websc\Scripts\activate on Windows, or source websc/bin/activate on Linux and macOS. Once it is activated, you will see its name in parentheses on the left-hand side of the prompt.

We can install any module in this environment by running pip install followed by the module name.

To deactivate the virtual environment, run the command deactivate.

The (websc) prefix then disappears from the prompt, showing that the environment has been deactivated.
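The idea of an isolated environment can also be sketched from Python itself. The example below is a minimal sketch that uses the standard-library venv module as a stand-in for the virtualenv command, which is a swapped-in technique rather than the tool described above. The helper name create_environment and the temporary project directory are assumptions made for illustration; only the environment name websc comes from this tutorial.

```python
import tempfile
import venv
from pathlib import Path


def create_environment(env_dir: Path) -> Path:
    """Create an isolated environment in env_dir and return its config file path."""
    # with_pip=False keeps the sketch fast; with_pip=True would also bootstrap pip.
    builder = venv.EnvBuilder(with_pip=False)
    builder.create(env_dir)
    # Every environment created by venv contains a pyvenv.cfg file at its root.
    return env_dir / "pyvenv.cfg"


# A temporary directory stands in for the project directory (an assumption).
with tempfile.TemporaryDirectory() as project_dir:
    config_file = create_environment(Path(project_dir) / "websc")
    print("Environment created:", config_file.exists())
```

An environment created this way is activated and deactivated with the same activate and deactivate commands as one created by virtualenv.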

Python Modules for Web Scraping

Web scraping is the process of constructing an agent which can extract, parse, download and organize useful information from the web automatically. In other words, instead of manually saving the data from websites, the web scraping software will automatically load and extract data from multiple websites as per our requirement.

In this section, we discuss useful Python libraries for web scraping.

Requests

Requests is a simple and efficient HTTP library for Python, used for accessing web pages. With the help of Requests, we can get the raw HTML of a web page, which can then be parsed to retrieve the data. Before using Requests, let us look at how to install it.

Installing Requests

We can install Requests either in our virtual environment or in the global installation. With pip, the command is pip install requests.

Example

In this example, we make an HTTP GET request for a web page. First, we import the library with the statement import requests.

Next, we call requests.get() with the URL https://authoraditiagarwal.com/ to make the GET request, and keep the response object it returns.

The .text property of that response then holds the content of the page as a string.

Slicing that string, for example with [:200], shows only the first 200 characters of the page.
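The steps above can be put together as follows. This is a minimal sketch rather than the original listing: the function name fetch_preview, its limit and timeout parameters, and the raise_for_status() check are assumptions added for illustration, while requests.get() and the .text property are the calls described in the text.

```python
import requests


def fetch_preview(url: str, limit: int = 200, timeout: float = 10.0) -> str:
    """Return the first `limit` characters of the page at `url`."""
    # Make the HTTP GET request; the timeout avoids waiting forever on a slow site.
    response = requests.get(url, timeout=timeout)
    # Raise an exception for 4xx/5xx responses instead of returning an error page.
    response.raise_for_status()
    # .text is the decoded body of the response; keep only the first characters.
    return response.text[:limit]


# Example call (needs network access):
# print(fetch_preview("https://authoraditiagarwal.com/"))
```

The raw HTML returned here is what a parser such as BeautifulSoup would then take as input.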

Urllib3

Urllib3 is another Python library for retrieving data from URLs, similar to the Requests library. You can read more in its technical documentation at https://urllib3.readthedocs.io/en/latest/.

Installing Urllib3

Using the pip command, we can install urllib3 either in our virtual environment or in the global installation. The command is pip install urllib3.

Example: Scraping using Urllib3 and BeautifulSoup

In this example, we scrape a web page using Urllib3 and BeautifulSoup. We use Urllib3 in place of the Requests library to get the raw data (HTML) from the web page, and then use BeautifulSoup to parse that HTML.
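A minimal sketch of this combination is shown below. It is not the original listing: the helper names fetch_html and page_title are hypothetical, extracting the page title is only one illustrative choice of what to parse, and the built-in html.parser backend is assumed so that no extra parser needs to be installed. BeautifulSoup itself comes from the separate beautifulsoup4 package.

```python
import urllib3
from bs4 import BeautifulSoup


def fetch_html(url: str) -> bytes:
    """Download the raw HTML of a page with urllib3."""
    # A PoolManager handles connection pooling for all requests made through it.
    http = urllib3.PoolManager()
    response = http.request("GET", url)
    # .data holds the undecoded response body as bytes.
    return response.data


def page_title(html: bytes) -> str:
    """Parse HTML with BeautifulSoup and return the text of its <title> tag."""
    soup = BeautifulSoup(html, "html.parser")
    # soup.title is None when the document has no <title> element.
    return soup.title.get_text(strip=True) if soup.title else ""


# Example call (needs network access):
# print(page_title(fetch_html("https://authoraditiagarwal.com/")))
```

Keeping the download and the parsing in separate functions makes it possible to try the parsing step on a saved HTML file without touching the network.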

Selenium

Selenium is an open-source automated testing suite for web applications across different browsers and platforms. It is not a single tool but a suite of software. Selenium has bindings for Python, Java, C#, Ruby and JavaScript. Here we perform web scraping using Selenium and its Python bindings. You can learn more about using Selenium with Java in the Selenium tutorial.

The Selenium Python bindings provide a convenient API to access Selenium WebDrivers such as Firefox, IE, Chrome and Remote. The Python versions supported at the time of writing were 2.7 and 3.5 and above; current Selenium releases run on Python 3 only.

Installing Selenium

Using the pip command, we can install Selenium either in our virtual environment or in the global installation. The command is pip install selenium.

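As Selenium requires a driver to interface with the chosen browser, we need to download the driver that matches our browser. The following table lists different browsers and the driver each one uses.

Browser | Driver
Chrome | ChromeDriver
Edge | Microsoft Edge WebDriver
Firefox | geckodriver
Safari | safaridriver (included with macOS)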

Example

This example shows web scraping using Selenium. Selenium can also be used for testing, which is known as Selenium testing.

After downloading the driver that matches our browser version, we can write the Python script.

First, we import webdriver from selenium with the statement from selenium import webdriver.

Next, we create the browser instance, providing the path to the web driver we downloaded.

Then we pass the URL we want to open to the driver's get() method. The page loads in a browser window that is now controlled by our Python script.

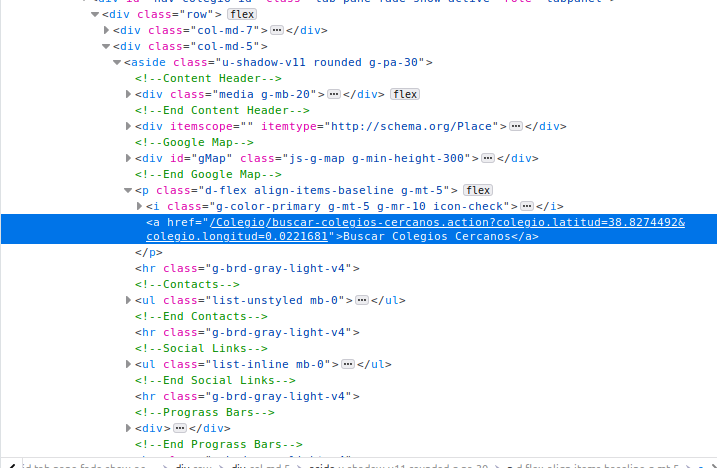

We can also scrape a particular element by providing its XPath, in the same way as with lxml.

You can check the browser, controlled by Python script, for output.

Scrapy

Scrapy is a fast, open-source web crawling framework written in Python. It extracts data from web pages with the help of selectors based on XPath or CSS expressions. Scrapy was first released on June 26, 2008 under the BSD license, and its milestone 1.0 release followed in June 2015. It provides all the tools we need to extract, process and structure data from websites.

Installing Scrapy

Using the pip command, we can install Scrapy either in our virtual environment or in the global installation. The command is pip install scrapy.

For a more detailed study of Scrapy, see the Scrapy tutorial.